Agentic Test Automation in action

AI-powered testing assistant purpose-built for Tosca to enable...

What is ETL testing? Data warehouse/BI infrastructure testing? BI application/report testing? What’s the difference between them?

Editor’s note: Wayne Yaddow is an independent consultant with over 20 years’ experience leading data migration/integration/ETL testing projects at organizations including J.P. Morgan Chase, Credit Suisse, Standard and Poor’s, AIG, Oppenheimer Funds, IBM, and Achieve3000. Additionally, Wayne has taught IIST (International Institute of Software Testing) courses on data warehouse, ETL, and data integration testing. He continues to lead numerous ETL testing and coaching projects on a consulting basis. You can contact him at wyaddow@gmail.com.

When referring to business intelligence quality assurance, we often discover that the terms data warehouse (DWH) testing and ETL Testing are used interchangeably as though they are one and the same.

Data warehouses can be defined as a collection of data that may include all of an organization’s data. They came into existence due to more focus from senior management on data as well as data-driven decision making (business intelligence). Historical collections of online transaction processing (OLTP) data, combined with continuous updating of current data for analysis and forecasting, is implemented to support management decisions. Since many organizational decisions depend on the data warehouse, the data should be of the highest quality.

To ensure that organizations make smart, accurate decisions, testing should be planned and executed very efficiently to avoid erroneous data being pumped into the database—then ultimately obfuscating senior management’s decision-making process.

This article summarizes three testing strategies often associated with business intelligence quality assurance: ETL testing, data warehouse/BI infrastructure testing, and BI application/report testing. In doing so, it aims to clarify distinctions among these three primary categories of testing. Considerable research has proven how these strategies increase productivity and ensure accurate data flow into the final warehouse and BI reports. Following these approaches is a reliable way to prevent data-integrity issues from undermining the business value of the data warehouse.

ETL testing is a sub-component of overall DWH testing. A data warehouse is essentially built using data extractions, data transformations, and data loads. ETL processes extract data from sources, transform the data according to BI reporting requirements, then load the data to a target data warehouse. Figure 1 shows the general components involved in the ETL process.

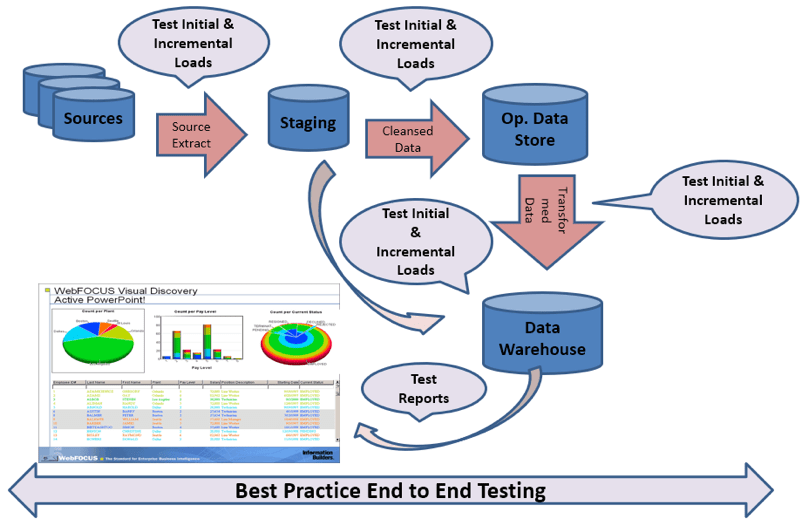

Figure 1: ETL testing for data staging, data cleansing, and DWH loads

After selecting data from the sources, ETL procedures resolve problems in the data, convert data into a common model appropriate for research and analysis, and write the data to staging and cleansing areas—then finally to the target data warehouse. Among the four components presented in Figure 1, the design and implementation of the ETL process requires the largest effort in the development life cycle. ETL’s processes present many challenges, such as extracting data from multiple heterogeneous sources involving different data models, detecting and fixing a wide variety of errors/issues in data, then transforming the data into different formats that match the requirements of the target data warehouse.

A data warehouse keeps data gathered and integrated from different sources and stores the large number of records needed for long-term analysis. Implementations of data warehouses use various data models (such as dimensional or normalized models), and technologies (such as DBMS, Data Warehouse Appliance (DWA), and cloud data warehouse appliances).

ETL testing includes different types of testing for its three different processes (extract, transform, load).

Data extraction tests might check that:

Data transformation tests might check that:

Data loading testing might check that:

Several components of DWH development and test are not usually components of the ETL tool or stored procedures that may be used in the ETL process – therefore, testing these processes will be accomplished independent of ETL tests.

For example, this includes use of tools to profile data sources for format and content issues, checks for missing source data/records, DWH security, etc. These categories of testing can be considered “DWH infrastructure verifications.”

“DWH/BI infrastructure” generally consists of:

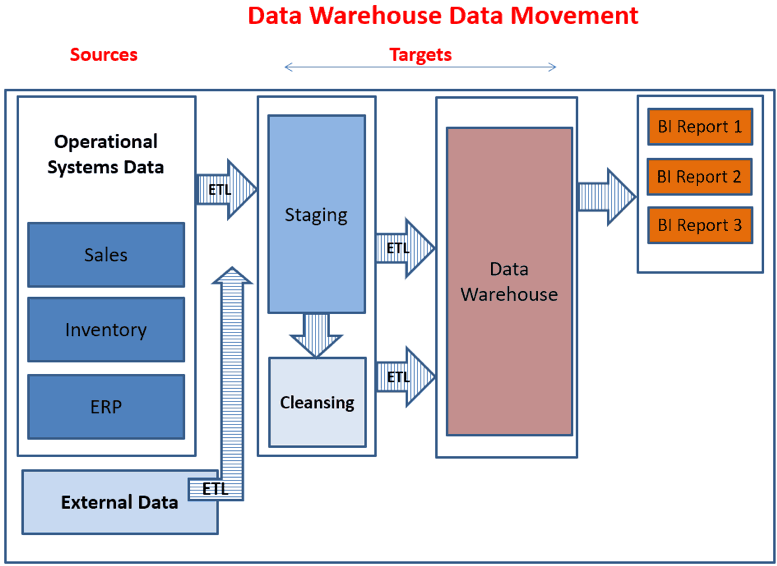

DWH/BI infrastructure components must be tested for (among other things) scalability, security, reliability, and performance (e.g., with load and stress tests). DWH/BI infrastructure as a whole supports data warehouse data movement as shown in Figure 2.

Figure 2: The data warehouse infrastructure supports all DWH, ETL, and BI Functions

Data warehouse infrastructure basically supports a data warehousing environment with the aid of many technologies.

Front-end BI applications are often desktop, web, and/or mobile applications and reports. They include analysis and decision support tools, and online analytical processing (OLAP) report generators. These applications make it easy for end-users to construct complex queries for requesting information from data warehouses—without requiring sophisticated programming skills.

End user reporting is a major component of any business intelligence project. The report code may execute aggregate SQL queries against the data stored in data marts and/or the operational DW tables, then display results in the required format (either in a web browser or on a client application interface).

For each type of report, there are several types of tests to be considered:

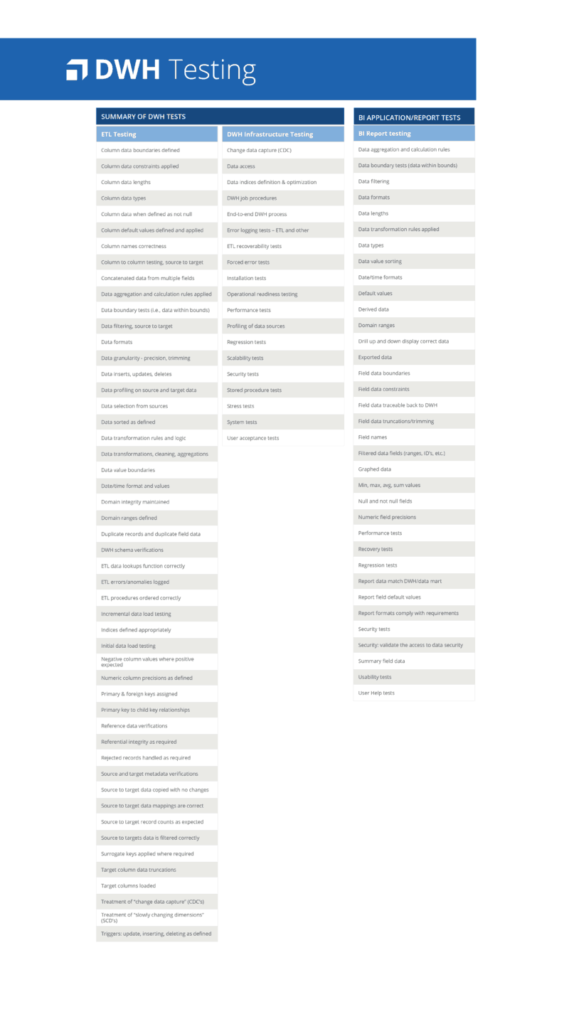

The following graphic lists categories of tests that should be considered for DWH and BI report testing. From this list, those planning DWH/ETL/BI tests can select and prioritized the types of testing they will/should perform during each phase of testing during a project.

AI-powered testing assistant purpose-built for Tosca to enable...

We’re extending our proven expertise in test automation with...

Learn how to build a Workday testing strategy that minimizes...

Connect NeoLoad with Datadog to unlock real-time observability and...

Learn how a top European bank drove Agile transformation, tackled...