Last spring, my team hit a breaking point in regression. Our UI shifted twice a week, selectors broke nightly, and honestly, the team spent more time repairing tests than validating new features.

The pivot that worked wasn’t another framework. It was a change in how tests got executed: let an agent read intent, act like a user, and adapt when the UI moves.

What is agentic testing?

TL;DR: Agentic testing uses autonomous AI agents to execute tests from intent, adapt to UI changes, and report results without relying on fixed scripts. It shifts testing from rigid step execution to goal-driven autonomous action.

Agentic testing is a testing approach where autonomous AI agents plan and execute tests from intent, observe outcomes, and adapt their next steps without relying on fixed scripts. The goal stays stable while the agent chooses the best actions to reach it.

Agentic capabilities are the planning, tool use, and feedback loops that let an AI system decide, act, and learn within guardrails. In QA, that translates to tests that can recover from UI drift, choose alternate paths, and report results in human-friendly language.

Software testing still anchors the mission here. As Jason Arbon, CEO of Checkie.ai, put it, “The future of testing isn’t about writing more tests, it’s about letting intelligent agents explore your application the way real users would, finding the bugs that scripted tests miss.”

This mindset shift is what makes agentic testing different. Agentic testing turns test intent into autonomous action.

How agentic testing differs from traditional test automation

TL;DR: Traditional automation follows fixed scripts and struggles with UI changes, while agentic testing executes from intent and adapts when interfaces shift. The focus moves from brittle step authoring to defining goals and outcomes.

Traditional automation is powerful when systems are stable and change is slow. But let’s be real, how often is that the case anymore? Agentic testing shifts the work from writing brittle steps to defining goals and outcomes.

| Area | Traditional Automation | Agentic Testing |
|---|---|---|
| Authoring | Scripted steps and selectors | Intent and expected outcomes |
| Maintenance | High when UI changes | Lower when UI shifts |
| Execution | Fixed path | Adaptive path |
| Best for | Stable flows | High-variance user journeys |
| Failure handling | Stops on unexpected UI | Attempts alternate paths |

A clean test suite is still required, though. Agentic testing doesn’t excuse unclear requirements or unstable environments. It just reduces the tax of change.
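To make the Authoring and Failure handling rows concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular tool works: `Element`, `find_by_id`, and `find_by_intent` are hypothetical names, the page is a plain list rather than a real DOM, and simple keyword overlap stands in for whatever matching a real agent would use.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Element:
    """A simplified stand-in for a UI element (hypothetical model)."""
    element_id: str
    role: str
    label: str


def find_by_id(page: list[Element], element_id: str) -> Element | None:
    """Scripted style: succeed only if the exact id still exists."""
    return next((el for el in page if el.element_id == element_id), None)


def find_by_intent(page: list[Element], role: str, keywords: set[str]) -> Element | None:
    """Intent style: pick the element of the right role whose label shares
    the most words with the goal. Returns None if nothing matches."""
    best, best_score = None, 0
    for el in page:
        if el.role != role:
            continue
        score = len(keywords & set(el.label.lower().split()))
        if score > best_score:
            best, best_score = el, score
    return best


# The same checkout page before and after a redesign changed the button id.
page_v1 = [Element("btn-checkout", "button", "Checkout")]
page_v2 = [
    Element("btn-help", "button", "Help"),
    Element("cta-primary", "button", "Proceed to checkout"),
]

scripted = find_by_id(page_v2, "btn-checkout")               # the old selector is gone
adaptive = find_by_intent(page_v2, "button", {"checkout"})   # the goal still resolves
print(scripted)
print(adaptive.label if adaptive else "no match")
```

The scripted lookup fails on the redesigned page because it depends on an id that no longer exists, while the intent-based lookup still finds the button because the goal did not change.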

Agentic testing vs. AI-assisted testing

TL;DR: AI-assisted testing helps humans generate or summarize test artifacts, whereas agentic testing executes and adjusts tests autonomously at runtime. In short, AI-assisted helps you write, agentic helps you run.

This distinction trips people up a lot. AI-assisted testing helps humans write tests faster or summarize results. Agentic testing makes the AI responsible for executing and adjusting the test itself.

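The two differ in what they do and when they help:

- What they do. AI-assisted testing suggests or generates artifacts, whereas agentic testing acts.

- When they help. AI-assisted testing helps at authoring time, whereas agentic testing helps at run time.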

A short way to remember it: AI-assisted helps you write. Agentic helps you run.

How agentic testing works in practice

TL;DR: Agentic testing follows a loop where intent is defined, actions are planned, execution is performed, and results are adapted and reported. The agent observes changes and adjusts paths rather than stopping on unexpected UI behavior.

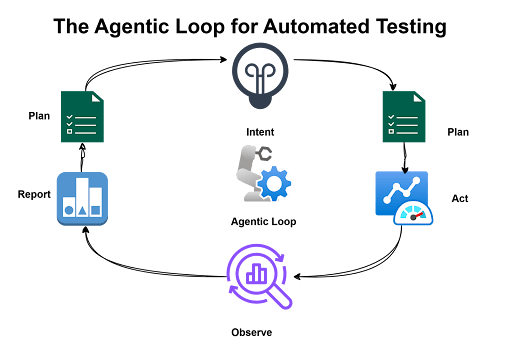

In most implementations we’ve seen, an agentic loop looks something like this:

- Intent is defined. A tester provides a goal and expected outcomes.

- The agent plans actions. It maps the goal into UI or API steps.

- The agent acts and observes. It clicks, types, and reads the UI like a real user would.

- The agent adapts and reports. If the UI changes, it retries or finds a new path, then summarizes results.

It’s not magic, but it feels close when you watch a test recover after a button is renamed or moved elsewhere on the page.
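The loop above can be sketched in a few lines of Python. This is a toy under clear assumptions: `FakeApp` and `run_agent` are hypothetical names, the application is simulated in memory, and the `candidates` list stands in for the plan a real agent would produce from the intent.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class FakeApp:
    """Toy application in which the 'Buy' button was renamed to 'Order now'."""
    buttons: set[str] = field(default_factory=lambda: {"Order now", "Help"})
    order_placed: bool = False

    def click(self, label: str) -> bool:
        if label not in self.buttons:
            return False  # the control the plan expected is gone
        if label == "Order now":
            self.order_placed = True
        return True


def run_agent(app: FakeApp, goal: str, candidates: list[str], max_attempts: int = 3) -> list[str]:
    """Act, observe, adapt, and return a readable report.

    `candidates` is a stand-in for the planning step (an assumption of this sketch).
    """
    report = [f"Goal: {goal}"]
    for attempt, label in enumerate(candidates[:max_attempts], start=1):
        clicked = app.click(label)                      # act
        if not clicked:                                 # observe
            report.append(f"Attempt {attempt}: '{label}' not found, trying another path")
            continue                                    # adapt
        report.append(f"Attempt {attempt}: clicked '{label}'")
        if app.order_placed:                            # check the expected outcome
            report.append("Result: PASS")
            return report
    report.append("Result: FAIL")
    return report


report = run_agent(FakeApp(), "place an order", ["Buy", "Order now"])
print("\n".join(report))
```

The attempt limit matters as much as the retry: an agent that adapts forever hides real failures, so the sketch reports FAIL once its budget runs out.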

Key capabilities and benefits of agentic testing

TL;DR: Agentic testing enables intent-based execution, UI resilience, natural language reporting, and reduced maintenance overhead. It shifts effort from repairing brittle scripts to expanding test coverage.

Here are the capabilities we see driving adoption in modern QA teams:

- Intent-based execution. Tests are expressed as goals, not brittle step lists.

- UI resilience. Agents can recover when labels move or selectors change.

- Natural language reporting. Results are readable by leads and non-testers.

- Lower maintenance cost. Less time spent updating selectors and scripts.

The short takeaway: agentic testing shifts effort from repair to coverage.
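As one illustration of natural language reporting, the sketch below turns structured step results into a short summary a lead can read without opening a log. `StepResult` and `summarize` are hypothetical names, and the wording template is an assumption. A real agent would likely generate richer text.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class StepResult:
    """One recorded step of a run (hypothetical structure)."""
    description: str
    passed: bool
    note: str = ""


def summarize(flow: str, steps: list[StepResult]) -> str:
    """Render step results as a short plain-English summary."""
    failed = [s for s in steps if not s.passed]
    status = "failed" if failed else "passed"
    lines = [f"{flow} {status}: {len(steps) - len(failed)} of {len(steps)} steps succeeded."]
    for s in failed:
        detail = f" ({s.note})" if s.note else ""
        lines.append(f"- Could not {s.description}{detail}.")
    return "\n".join(lines)


steps = [
    StepResult("add item to cart", True),
    StepResult("apply coupon", False, "coupon field missing"),
]
summary = summarize("Checkout", steps)
print(summary)
```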

Common agentic testing use cases

TL;DR: Agentic testing is best suited for high-change, high-value user journeys like checkout, onboarding, and exploratory smoke validation. It delivers the most value where traditional UI automation tends to be fragile.

Agentic testing doesn’t replace your entire test pyramid. It shines where stability is low and feedback matters most.

- Critical user journeys. Checkout, onboarding, subscription changes.

- High-change UI areas. Marketing pages, configuration screens, feature flags.

- Exploratory smoke coverage. Quick validation after a deployment.

We’ve seen teams get the most value at the top of the pyramid, where traditional automation tends to be most fragile anyway.

Getting started with agentic testing

TL;DR: Start with a small pilot on one high-value flow, clearly define intent and assertions, stabilize data, and scale gradually. Successful adoption builds confidence incrementally rather than attempting broad rollout immediately.

If you’re new to agentic testing, start with a scoped pilot. A simple path works best:

- Pick one high-value flow. Choose a journey that breaks often and affects revenue.

- Define intent clearly. Write the goal and acceptance criteria in plain language.

- Stabilize test data. Provide accounts and datasets the agent can safely use.

- Run in a controlled environment. Start in staging, then expand to pre-production.

- Scale slowly. Add two or three more flows once confidence is earned.

Don’t try to boil the ocean on day one. The teams that succeed start small and build trust incrementally.
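One way to keep a pilot honest is to write the intent down as data and review it before any run. The sketch below assumes a hypothetical `FlowIntent` structure and a `review` helper. The field names, the account name, and the five-word threshold for a usable goal are illustrative choices, not a standard.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class FlowIntent:
    """Plain-language intent for one pilot flow (hypothetical structure)."""
    goal: str
    acceptance_criteria: tuple[str, ...]
    environment: str = "staging"
    test_account: str = ""


def review(intent: FlowIntent) -> list[str]:
    """Return a list of problems that should be fixed before the agent runs."""
    problems = []
    if len(intent.goal.split()) < 5:  # crude heuristic, an assumption of this sketch
        problems.append("goal is too short to act on")
    if not intent.acceptance_criteria:
        problems.append("no acceptance criteria, so 'done' is undefined")
    if intent.environment not in ("staging", "pre-production"):
        problems.append(f"environment '{intent.environment}' is not a controlled one")
    if not intent.test_account:
        problems.append("no dedicated test account")
    return problems


checkout = FlowIntent(
    goal="A returning customer buys one in-stock item with a saved card",
    acceptance_criteria=(
        "An order confirmation page is shown",
        "The order appears in the account's order history",
    ),
    test_account="qa-checkout-01",
)
vague = FlowIntent(goal="Test checkout", acceptance_criteria=(), environment="production")

print(review(checkout))  # an empty list means the pilot is ready to run
print(review(vague))
```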

Best practices for implementing agentic testing

TL;DR: Clear intent, explicit assertions, strong observability, guardrails, and isolated test data are essential for trustworthy agentic execution. Autonomy must be paired with control and visibility.

We’ve learned a few rules that keep adoption smooth and outcomes trustworthy:

- Write intent like a user story. Ambiguous goals lead to unpredictable paths.

- Add explicit assertions. Tell the agent what “done” looks like.

- Instrument the run. Collect logs, screenshots, and checkpoints.

- Use guardrails. Limit scope so agents don’t wander into risky areas.

- Keep data isolated. Reset accounts or seed data between runs.

Autonomy without observability is just automation you can’t trust.
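Guardrails and instrumentation can live in the same small component. The following sketch assumes a hypothetical `Guardrails` class that checks every navigation against a host allowlist, a set of blocked paths, and a step budget, and records each decision. The hosts and paths are made-up examples.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class Guardrails:
    """Hypothetical scope limits for one agent run."""
    allowed_hosts: frozenset[str]
    blocked_path_prefixes: tuple[str, ...]
    max_steps: int
    steps_taken: int = 0
    log: list[str] = field(default_factory=list)

    def allow(self, url: str) -> bool:
        """Return True if the agent may visit url, and record every decision."""
        parsed = urlparse(url)
        if self.steps_taken >= self.max_steps:
            reason = "step budget exhausted"
        elif parsed.hostname not in self.allowed_hosts:
            reason = "host outside test scope"
        elif parsed.path.startswith(self.blocked_path_prefixes):
            reason = "path is off limits"
        else:
            self.steps_taken += 1
            self.log.append(f"ALLOW {url}")
            return True
        self.log.append(f"DENY  {url} ({reason})")
        return False


rails = Guardrails(
    allowed_hosts=frozenset({"staging.example.test"}),
    blocked_path_prefixes=("/admin", "/billing/refunds"),
    max_steps=2,
)
decisions = [
    rails.allow(url)
    for url in (
        "https://staging.example.test/checkout",
        "https://staging.example.test/admin/users",
        "https://www.example.com/checkout",
        "https://staging.example.test/cart",
        "https://staging.example.test/orders",
    )
]
print("\n".join(rails.log))
```

Because denied requests are logged alongside allowed ones, the run leaves a trail that shows where the agent tried to go as well as where it went.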

Challenges and risks to plan for

TL;DR: Vague prompts, unstable environments, data collisions, and overreliance on AI can undermine agentic testing effectiveness. Planning for these risks early prevents unreliable results.

Agentic testing isn’t magic. These are the friction points we see most often:

- Overly vague prompts. The agent needs intent that is specific enough to execute.

- Unstable environments. Flaky staging will produce flaky agent results.

- Data collisions. Agents move fast and can exhaust test data if you don’t reset.

- Overreliance on AI. Some teams stop thinking critically about test design once agents take over. That’s a mistake.

Plan for these up front and you’ll save yourself a lot of headaches later.
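Data collisions in particular are cheap to prevent. Here is a minimal sketch, assuming a hypothetical in-memory `AccountPool`: each run leases a test account, and the account is reset to seed data when the run ends, even if the run fails. In a real setup the reset would call your own seeding scripts or APIs.

```python
from __future__ import annotations

import copy
from contextlib import contextmanager

# Seed state every test account returns to (illustrative values).
SEED = {"cart": [], "credits": 100}


class AccountPool:
    """Hands out test accounts one run at a time and resets them afterwards."""

    def __init__(self, names: list[str]) -> None:
        self._free = list(names)
        self._state = {name: copy.deepcopy(SEED) for name in names}

    @contextmanager
    def lease(self):
        if not self._free:
            raise RuntimeError("no free test accounts; runs would collide")
        name = self._free.pop()
        try:
            yield name, self._state[name]
        finally:
            self._state[name] = copy.deepcopy(SEED)  # reset between runs
            self._free.append(name)


pool = AccountPool(["qa-user-1"])

with pool.lease() as (name, state):
    state["credits"] -= 40           # the first run spends credits
    state["cart"].append("sku-123")

with pool.lease() as (name, state):
    fresh_credits = state["credits"]  # the second run sees seed data again
    fresh_cart = list(state["cart"])

print(fresh_credits, fresh_cart)
```

Raising when the pool is empty is deliberate: a run that waits or fails loudly is easier to diagnose than two agents silently sharing one account.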

How agentic technology applies to modern QA

TL;DR: Agentic testing aligns with modern QA’s need for speed and adaptability by elevating test design to goal definition and enabling adaptive execution. It enhances tester leverage rather than replacing human expertise.

Modern QA is about speed and adaptability. Agentic technology fits because it aligns with how teams already work: rapid changes, continuous delivery, and a constant need for fast feedback.

- Test design moves up a level. Testers define goals, boundaries, and success criteria instead of brittle steps.

- Execution becomes adaptive. The agent handles UI drift so QA can keep pace with product teams.

- Feedback is easier to share. Agent reports are readable by developers, QA leads, and executives.

This is why agentic testing feels less like a new tool and more like a modern QA operating model. It’s not replacing testers, but rather giving them better leverage.

How Tricentis supports agentic testing

TL;DR: Tricentis positions agentic testing within a broader AI-driven platform that connects intent to execution, reduces maintenance, and improves delivery confidence at enterprise scale.

Tricentis positions agentic testing as part of a broader AI-driven testing platform. The focus is on using AI to connect test intent to execution, reduce maintenance, and improve confidence across the software delivery life cycle. If you’re exploring this space, it’s worth seeing how the pieces fit together.

See how Tricentis can enable AI-driven testing and modernize quality across your enterprise.

This post was written by Rollend Xavier. Rollend is a senior software engineer and a freelance writer. He has over 18 years of experience in software development and cloud architecture, and he is based in Perth, Australia. He’s passionate about cloud platform adoption, DevOps, Azure, Terraform, and other cutting-edge technologies. He also writes articles and books to share his knowledge and insights with the world. He is the author of the book Automate Your Life: Streamline Your Daily Tasks with Python: 30 Powerful Ways to Streamline Your Daily Tasks with Python.